GSA SER Verified Lists Vs Scraping

The Foundation of Automated Link Building

Anyone running a serious GSA Search Engine Ranker campaign inevitably faces a critical decision: should you invest in pre‑verified lists or rely on scraping your own targets? The phrase “GSA SER verified lists vs scraping” sparks debate in forums and private groups because each method directly affects link velocity, domain quality, and overall campaign longevity. Understanding the mechanics behind each approach is essential before committing your server resources and money.

What Are GSA SER Verified Lists?

A verified list is a pre‑built database of URLs that have already been checked for successful submission and, in many cases, for a confirmed live link. Vendors run massive harvesting and verification operations, often using dozens of proxies and captcha‑solving services, then package the results. The list typically includes the platform type (WordPress, Joomla, guestbook, etc.), the exact posting URL, and sometimes the success rate.

Core Characteristics of Verified Lists

- Ready‑to‑use out of the box – drag and drop into GSA SER

- Pre‑filtered for dead platforms, redirects, and honeypots

- Often segmented by engine type and PageRank flow

- Updated on a schedule – daily, weekly, or monthly refreshes

- Include metrics like Oblique Ratio, Trust Flow, and spam flags
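Vendors ship lists in their own formats, so it helps to normalize and segment a list before loading it. The sketch below assumes a hypothetical pipe‑separated export (`platform|url|success_rate`); the `ListEntry` type and both helper functions are illustrative names, not part of GSA SER or any vendor's tooling.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Optional


@dataclass
class ListEntry:
    platform: str                  # engine type, e.g. "WordPress" or "Guestbook"
    url: str                       # exact posting URL
    success_rate: Optional[float]  # None when the vendor does not report one


def parse_line(line: str) -> Optional[ListEntry]:
    """Parse one line in a HYPOTHETICAL 'platform|url|success_rate' export format."""
    parts = [p.strip() for p in line.split("|")]
    if len(parts) < 2 or not parts[1].startswith(("http://", "https://")):
        return None  # malformed line: skip it rather than guess
    rate: Optional[float] = None
    if len(parts) >= 3 and parts[2]:
        try:
            rate = float(parts[2])
        except ValueError:
            rate = None  # non-numeric rate column: keep the URL, drop the rate
    return ListEntry(platform=parts[0], url=parts[1], success_rate=rate)


def segment_by_platform(lines: Iterable[str]) -> Dict[str, List[ListEntry]]:
    """Group parsed entries by engine type so each group can be imported separately."""
    groups: Dict[str, List[ListEntry]] = defaultdict(list)
    for line in lines:
        entry = parse_line(line)
        if entry is not None:
            groups[entry.platform].append(entry)
    return dict(groups)


sample = [
    "WordPress|https://example.com/blog/post-1|0.82",
    "Guestbook|https://example.org/guestbook.php|",
    "not a valid line",
]
print({name: len(entries) for name, entries in segment_by_platform(sample).items()})
# {'WordPress': 1, 'Guestbook': 1}
```

Malformed lines are dropped instead of repaired, on the assumption that a URL you cannot parse is not worth a posting attempt.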

The Scraping Approach Defined

Scraping means you harvest fresh target URLs in real time using tools such as Scrapebox or custom Python scripts, typically backed by proxies from a tool like GSA Proxy Scraper. The harvested list is then fed into GSA SER, which attempts to register and post. Success depends heavily on the quality of your footprints, the freshness of your proxies, and the captcha‑solving budget you allocate. This method places control entirely in your hands.
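A scraping run usually starts by crossing a set of footprints with niche keywords to produce search queries. The following is a minimal sketch of that expansion step; the footprints and keywords are hypothetical examples, and `build_queries` is an illustrative helper rather than a feature of any particular scraper.

```python
from itertools import product
from typing import List, Sequence


def build_queries(footprints: Sequence[str], keywords: Sequence[str]) -> List[str]:
    """Pair every footprint with every quoted keyword, preserving order and dropping duplicates."""
    seen = set()
    queries = []
    for footprint, keyword in product(footprints, keywords):
        query = f'{footprint} "{keyword}"'
        if query not in seen:
            seen.add(query)
            queries.append(query)
    return queries


# Hypothetical footprints and niche keywords, for illustration only.
footprints = ['"powered by wordpress"', "inurl:guestbook"]
keywords = ["gardening", "bonsai"]
for q in build_queries(footprints, keywords):
    print(q)
```

The query count grows as footprints × keywords, which is one reason scraping consumes so much proxy capacity.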

What Scraping Gives You

- Potentially untouched URLs that haven’t been spammed to death

- Flexibility to chase niche‑specific footprints

- No recurring list vendor cost – only your server and tool expenses

- Complete transparency over the source of every URL

- Ability to blend multiple search engines and custom sources

Comparing Efficiency: Time, Cost, and Resource Burn

When evaluating “GSA SER verified lists vs scraping,” the most immediate trade‑off is how you spend time and hardware. Verified lists eliminate the scraping phase entirely, meaning GSA SER can start posting minutes after you load them. Scraping, on the other hand, adds a resource‑hungry layer before a single backlink is built.

Resource Consumption at a Glance

Use the following table to compare the two methods quickly:

| Factor | Verified lists | Scraping |
|---|---|---|
| Resource usage | Low CPU/RAM during initial load; massive threading possible immediately | High CPU and proxy usage for hours per day; depends on footprint complexity |
| Cost | Fixed cost per list; predictable monthly budget | Variable cost tied to proxy bandwidth, captcha solves, and electricity |
| Maintenance | Minimal; focus stays on posting and verification | Constant need to tweak footprints as platforms die |

Link Diversity and Quality: The Real Differentiator

Many marketers assume scraped links are automatically better because they are “fresh.” In practice, fresh frequently means unverified. A scraped list may contain thousands of URLs where posting fails because the platform changed its architecture or has aggressive anti‑spam measures. Verified list vendors already absorb that failure rate and deliver URLs with a known success probability. However, premium verified lists can get saturated quickly when thousands of users load the same targets simultaneously.

Scraping with carefully crafted, long‑tail footprints reaches the hidden corners of the web where nobody else is submitting. That uniqueness often translates into higher indexing rates and a less recognizable footprint for your own network. The downside is noise: expect a 70–90% failure rate on raw harvested URLs unless your filtering is impeccable.
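To put that failure rate in concrete terms, the arithmetic below converts a raw harvest into an expected range of usable targets. The 100,000‑URL harvest is a hypothetical figure; the 70–90% range is the one quoted in this section.

```python
from typing import Tuple


def usable_range(raw_urls: int, min_fail: float, max_fail: float) -> Tuple[int, int]:
    """Expected usable targets given a range of failure rates (fractions 0-1)."""
    low = round(raw_urls * (1 - max_fail))   # worst case: highest failure rate
    high = round(raw_urls * (1 - min_fail))  # best case: lowest failure rate
    return low, high


# 100,000 is a hypothetical harvest size; 70-90% is the failure range quoted above.
print(usable_range(100_000, 0.70, 0.90))  # (10000, 30000)
```

In other words, every usable scraped target may cost three to ten harvested URLs' worth of proxy and captcha effort.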

Footprint Control and Platform Targeting

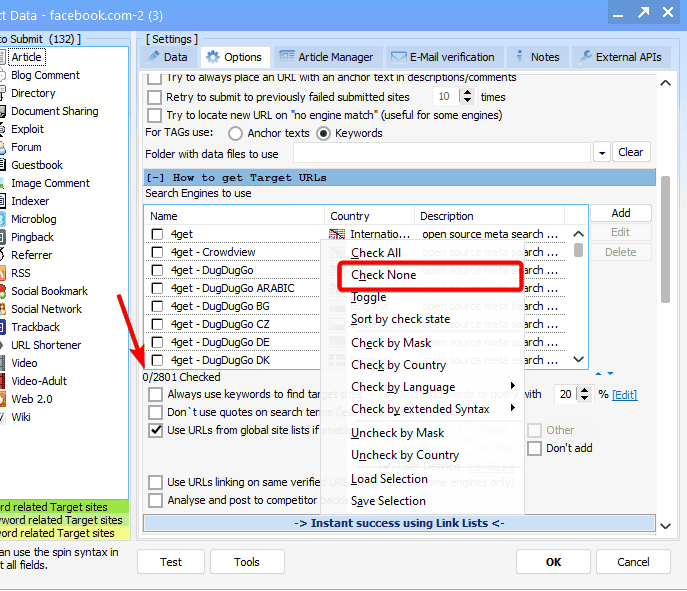

Every niche behaves differently. A verified list might give you 50,000 WordPress URLs, but if your campaign needs Edu and Gov backlinks, you are stuck. Scraping lets you build a custom gathering routine that targets specific TLDs, in‑url strings, or platforms. You can even scrape competitor backlink profiles to create a hyper‑relevant target bank. This granularity is the strongest argument in the “GSA SER verified lists vs scraping” conversation for advanced users.
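A custom gathering routine often ends with a filter that keeps only the TLDs and in‑url strings you care about. This is a minimal sketch using Python's standard `urllib.parse`; the default `.edu`/`.gov` suffixes and the `wp-` path string are example choices, not recommendations.

```python
from typing import Iterable, List, Sequence
from urllib.parse import urlparse


def matches_target(url: str, tlds: Sequence[str] = (".edu", ".gov"), inurl: Sequence[str] = ()) -> bool:
    """True if the host ends with one of `tlds` and the path contains every `inurl` string."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    if tlds and not host.endswith(tuple(t.lower() for t in tlds)):
        return False
    path = parsed.path.lower()
    return all(s.lower() in path for s in inurl)


def filter_targets(urls: Iterable[str], **criteria) -> List[str]:
    return [u for u in urls if matches_target(u, **criteria)]


harvested = [
    "https://blog.example.edu/wp-comments-post.php",
    "https://example.com/wp-comments-post.php",
    "https://agency.example.gov/news/",
]
print(filter_targets(harvested, inurl=("wp-",)))
# ['https://blog.example.edu/wp-comments-post.php']
```

Note that a plain suffix match skips country‑level academic domains such as `.ac.uk` or `.edu.au`; add them to `tlds` if they belong in your target bank.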

Where Each Method Excels

- Use verified lists when:
  - You need to blast a large volume of general links fast
  - You don’t have the time to maintain scraping servers
  - Your primary goal is anchor diversity across millions of domains
  - You are running tier‑2 and tier‑3 campaigns that don’t demand elite metrics

- Use scraping when:
  - You need niche‑relevant, high‑authority targets
  - You are building a private blog network (PBN) feeder list
  - You want to avoid footprints shared by thousands of other GSA users
  - You have a dedicated server and a solid proxy infrastructure

The Hybrid Workflow: Best of Both Worlds

Smart automation often means not choosing one method exclusively. A hybrid strategy uses verified lists for the mass posting layer (tier‑3) and reserves time‑intensive scraping for the high‑value tier‑1 support links. You can also take a base verified list and run it through a custom scraper that enriches each URL with fresh metrics from Ahrefs or Moz before letting GSA SER loose. This eliminates dead wood and combines the speed of pre‑made lists with the quality filter of scraping.
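Once fresh metrics are attached, pruning the base list is a simple filter. This sketch assumes you have already exported metrics into a dictionary; the `Metrics` fields, the 0–100 authority scale, and the threshold of 20 are hypothetical placeholders, not values from Ahrefs, Moz, or any specific API.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass
class Metrics:
    authority: float  # HYPOTHETICAL 0-100 score exported from whichever SEO tool you use
    is_live: bool     # did the posting URL respond normally on the latest check?


def prune_dead_wood(urls: Iterable[str], metrics: Dict[str, Metrics], min_authority: float = 20.0) -> List[str]:
    """Keep only URLs that are live and meet the authority threshold; unknown URLs are dropped."""
    kept = []
    for url in urls:
        m = metrics.get(url)
        if m is None or not m.is_live:
            continue  # no fresh data, or the page is gone: treat it as dead wood
        if m.authority >= min_authority:
            kept.append(url)
    return kept


base_list = ["https://a.example/post", "https://b.example/post", "https://c.example/post"]
fresh = {
    "https://a.example/post": Metrics(authority=35.0, is_live=True),
    "https://b.example/post": Metrics(authority=50.0, is_live=False),
}
print(prune_dead_wood(base_list, fresh))  # ['https://a.example/post']
```

Dropping URLs with no metrics is a conservative choice; if your enrichment step has gaps, you may prefer to keep unknowns for a lower tier instead.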

Frequently Asked Questions

Is it safe to buy GSA SER verified lists from public forums?

Safety depends entirely on the seller’s transparency. Ask for a sample and check it with a tool like URL Profiler. Avoid lists that package adult, pharma, or hacked links unless that fits your risk profile. A reputable vendor will show you the platform breakdown and the average Oblique Ratio. Public lists from unknown sources may contain honeypots designed to penalize your money sites.
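Before paying for a full list, a quick first pass over the vendor's sample can surface obvious problems. The sketch below draws a random sample and flags URLs containing risky keywords; the keyword list is a hypothetical starting point, and URL text alone only catches blatant cases, so a content‑level check in a tool like URL Profiler is still needed.

```python
import random
from typing import List, Optional, Sequence, Tuple

# HYPOTHETICAL keyword list; extend or trim it to match your own risk profile.
RISKY_TERMS = ("casino", "viagra", "porn", "pharma")


def audit_sample(
    urls: Sequence[str], sample_size: int = 100, seed: Optional[int] = None
) -> Tuple[List[str], List[str]]:
    """Draw a random sample and return (sample, URLs whose text contains a risky term)."""
    rng = random.Random(seed)
    sample = rng.sample(list(urls), min(sample_size, len(urls)))
    flagged = [u for u in sample if any(term in u.lower() for term in RISKY_TERMS)]
    return sample, flagged


vendor_sample = [
    "https://example.com/blog/post",
    "https://cheap-viagra.example/guestbook",
    "https://example.org/forum/thread",
]
sample, flagged = audit_sample(vendor_sample, sample_size=10, seed=1)
print(flagged)  # ['https://cheap-viagra.example/guestbook']
```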

How much do scraping footprints affect link quality?

Footprints are everything. A generic “powered by wordpress” footprint will give you the same garbage everyone else is hitting. Advanced operators build multi‑step footprints that chain inurl: and intitle: operators and exclude recent spam patterns. Better footprints yield fewer duplicates and far higher acceptance rates. Without solid footprints, scraping is just a slower way to acquire low‑quality targets.
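A multi‑step footprint is ultimately just a search string assembled from parts. This minimal sketch chains a quoted phrase, `inurl:` and `intitle:` operators, and `-"..."` exclusions; the example terms are hypothetical, and operator support differs between search engines, so test each footprint against the engines you actually scrape.

```python
from typing import Optional, Sequence


def compose_footprint(
    phrase: Optional[str] = None,
    inurl: Sequence[str] = (),
    intitle: Sequence[str] = (),
    exclude: Sequence[str] = (),
) -> str:
    """Chain a quoted phrase, inurl:/intitle: operators and -"..." exclusions into one query."""
    parts = []
    if phrase:
        parts.append(f'"{phrase}"')
    parts += [f"inurl:{term}" for term in inurl]
    parts += [f'intitle:"{term}"' for term in intitle]
    parts += [f'-"{term}"' for term in exclude]  # drop pages matching known spam patterns
    return " ".join(parts)


print(compose_footprint(
    phrase="leave a comment",
    inurl=("blog",),
    intitle=("gardening",),
    exclude=("casino",),
))
# "leave a comment" inurl:blog intitle:"gardening" -"casino"
```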

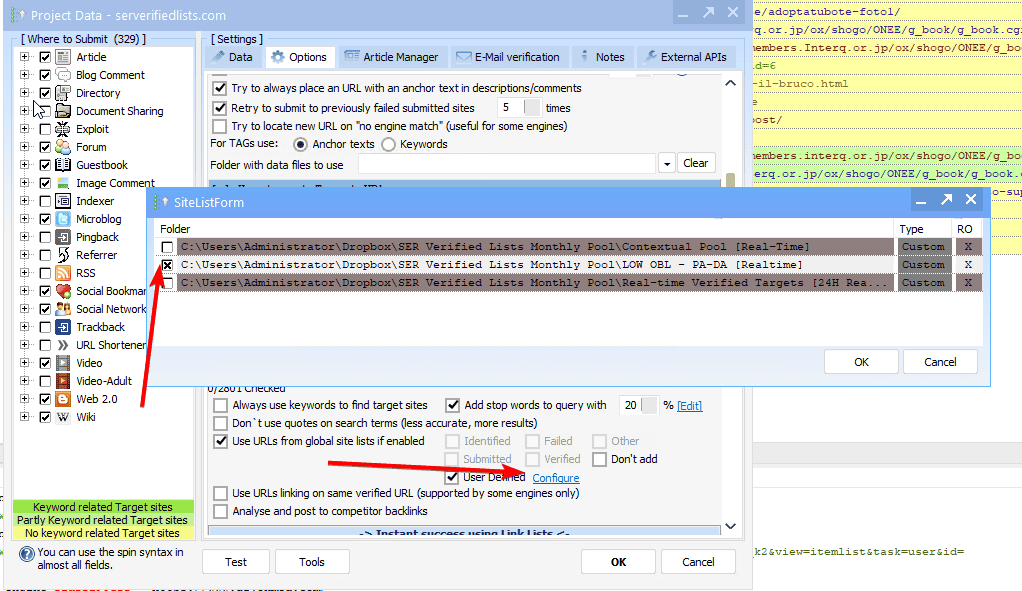

Can I use both methods in the same GSA SER project?

Absolutely. You can load verified lists into the “Import URLs” field and simultaneously enable GSA SER’s internal site lists or scrape sources. The engine will merge everything and de‑duplicate based on domain. This layered approach often yields the highest LPM (links per minute) while maintaining a healthy mix of aged and fresh targets.
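If you prefer to control the merge yourself before importing, a domain‑level de‑duplication pass is short to write. This is a sketch of that pre‑import step, not a description of GSA SER's internal logic; `merge_dedupe_by_domain` is a hypothetical helper that keeps the first URL per host, so the order you pass lists in sets their priority.

```python
from typing import Iterable, List
from urllib.parse import urlparse


def merge_dedupe_by_domain(*url_lists: Iterable[str]) -> List[str]:
    """Merge lists in priority order, keeping the first URL seen for each host."""
    seen = set()
    merged = []
    for urls in url_lists:
        for url in urls:
            host = (urlparse(url).hostname or "").lower()
            if host.startswith("www."):
                host = host[4:]  # treat www.example.com and example.com as one domain
            if not host or host in seen:
                continue
            seen.add(host)
            merged.append(url)
    return merged


verified = ["https://www.example.com/a", "https://site-b.example/post"]
scraped = ["https://example.com/b", "https://site-c.example/guestbook"]
print(merge_dedupe_by_domain(verified, scraped))
# ['https://www.example.com/a', 'https://site-b.example/post', 'https://site-c.example/guestbook']
```

Only the `www.` prefix is collapsed; other subdomains are treated as separate hosts, which may or may not match how you want to count domains.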

Why do scraped targets sometimes cause higher captcha costs?

Raw scraped URLs haven’t been pre‑validated, so GSA SER will aggressively attempt to register or post on every entry. Many of those attempts hit captcha walls on low‑quality sites that verified list vendors would have skipped. The result is a spike in captcha credit consumption until your failure filters learn to discard those platforms. You can mitigate this by pre‑filtering scraped lists with a lightweight headless browser check.
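As a cheaper stand‑in for a headless browser, you can scan HTML you have already fetched for common captcha widget markers. This sketch swaps in a plain string check: the marker list (reCAPTCHA's `g-recaptcha` class and `recaptcha/api.js` script, hCaptcha's `h-captcha` class and `hcaptcha.com` script host) is an assumption, and captchas injected by JavaScript after page load will not be detected this way.

```python
import re
from typing import Dict, List

# Markers typically found in the HTML of pages embedding reCAPTCHA or hCaptcha widgets.
# This list is an assumption, not exhaustive: JavaScript-injected or custom captchas will slip through.
CAPTCHA_MARKERS = re.compile(
    r"g-recaptcha|recaptcha/api\.js|h-captcha|hcaptcha\.com", re.IGNORECASE
)


def looks_captcha_gated(html: str) -> bool:
    return CAPTCHA_MARKERS.search(html) is not None


def prefilter(pages: Dict[str, str]) -> List[str]:
    """Return the URLs whose already-fetched HTML shows no known captcha marker."""
    return [url for url, html in pages.items() if not looks_captcha_gated(html)]


fetched = {
    "https://a.example/comment": '<div class="g-recaptcha" data-sitekey="..."></div>',
    "https://b.example/guestbook": "<form><textarea name='msg'></textarea></form>",
}
print(prefilter(fetched))  # ['https://b.example/guestbook']
```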

Do verified lists expire?

Yes. Platforms constantly update, delete spam, or move behind login walls. A verified list that is two weeks old may have a 30% lower success rate. The best vendors refresh daily, and you should always check the freshness date before purchasing. Stale lists waste proxies and flood the email accounts you use for verification with bounces.
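A freshness check is easy to automate before each purchase or import. This minimal sketch compares a list's freshness date against a cutoff; the 14‑day default is a hypothetical threshold echoing the two‑week example above, and `today` is passed explicitly so the check is testable (in practice you would pass `date.today()`).

```python
from datetime import date


def list_age_days(freshness_date: date, today: date) -> int:
    return (today - freshness_date).days


def is_stale(freshness_date: date, today: date, max_age_days: int = 14) -> bool:
    """Flag a list older than `max_age_days`; 14 days is a HYPOTHETICAL cutoff."""
    return list_age_days(freshness_date, today) > max_age_days


print(is_stale(date(2024, 1, 1), today=date(2024, 1, 20)))   # True  (19 days old)
print(is_stale(date(2024, 1, 10), today=date(2024, 1, 20)))  # False (10 days old)
```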

Making the Final Decision

The “GSA SER verified lists vs scraping” dilemma doesn’t have a universal answer. It reflects your infrastructure budget, your tolerance for maintenance, and your niche’s saturation level. Pure list buyers enjoy convenience but risk footprint overlap. Hardcore scrapers achieve unique link graphs but burn through proxies and time. Starting with a high‑quality verified list to establish a baseline, then layering in custom scraped targets for your top tiers, remains the most balanced and scalable approach in modern GSA SER campaigns.